From February 25 to 27, the 23rd USENIX Conference on File and Storage Technologies (FAST 2025) was held in Santa Clara, California, USA. The paper “Mooncake: Trading More Storage for Less Computation — A KVCache-centric Architecture for Serving LLM Chatbot,” co-authored by a research team from the Department of Computer Science and Technology, led by Assistant Professor Mingxing Zhang and Professors Yongwei Wu and Weimin Zheng, together with Moonshot AI, won the Erik Riedel Best Paper Award. The paper’s first author is Ruoyu Qin, a PhD student in the Department of Computer Science and Technology advised by Assistant Professor Mingxing Zhang.

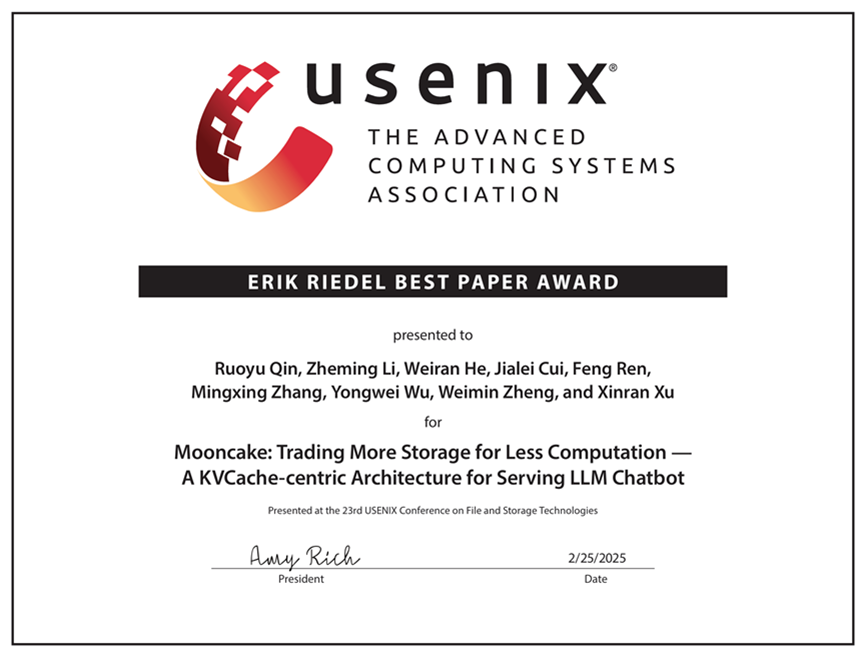

Best Paper Award presented at the conference

The paper introduces Mooncake, the serving platform for Kimi, the LLM service provided by Moonshot AI. Mooncake uses a KVCache-centric disaggregated architecture: it separates the prefill and decoding clusters, and it also pools the underutilized CPU, DRAM, SSD, and NIC resources of the inference cluster into a distributed KVCache pool. Central to Mooncake are this global KVCache pool and a KVCache-aware scheduler, designed together to maximize throughput while meeting strict latency-related service level objectives (SLOs).
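To make “KVCache-aware” scheduling concrete, here is a toy sketch rather than Mooncake’s actual scheduler. It assumes prompts are split into fixed-size token blocks identified by chained hashes, that each prefill node tracks which block hashes it already holds, and that time-to-first-token grows linearly with queued tokens plus uncached prompt tokens. The block size, the names `PrefillNode` and `choose_prefill_node`, and the cost model are all hypothetical. The point is the trade-off: a node that already caches a long prefix can skip recomputation, but only if its queue is not too long.

```python
import hashlib
from dataclasses import dataclass, field

BLOCK_TOKENS = 4  # hypothetical number of tokens per KVCache block


def block_hashes(tokens):
    """Chain-hash each full block so a hash identifies the entire prefix up to that block."""
    hashes, prev = [], b""
    full_len = len(tokens) - len(tokens) % BLOCK_TOKENS  # ignore a trailing partial block
    for i in range(0, full_len, BLOCK_TOKENS):
        h = hashlib.sha256(prev + repr(tokens[i:i + BLOCK_TOKENS]).encode()).hexdigest()
        hashes.append(h)
        prev = h.encode()
    return hashes


@dataclass
class PrefillNode:
    name: str
    queued_tokens: int                        # hypothetical load metric: tokens waiting to prefill
    cached: set = field(default_factory=set)  # block hashes held in this node's KVCache

    def prefix_hit_blocks(self, hashes):
        """Count leading blocks of the prompt already cached on this node."""
        n = 0
        for h in hashes:
            if h not in self.cached:
                break
            n += 1
        return n


def estimate_ttft(node, hashes, n_tokens, sec_per_token=0.001):
    """Assumed linear cost: queue wait plus compute for the uncached suffix."""
    hit_tokens = node.prefix_hit_blocks(hashes) * BLOCK_TOKENS
    return (node.queued_tokens + (n_tokens - hit_tokens)) * sec_per_token


def choose_prefill_node(nodes, tokens):
    """Pick the node with the lowest estimated time-to-first-token."""
    hashes = block_hashes(tokens)
    return min(nodes, key=lambda n: estimate_ttft(n, hashes, len(tokens)))


if __name__ == "__main__":
    prompt = list(range(16))
    warm = PrefillNode("warm", queued_tokens=4, cached=set(block_hashes(prompt)))
    idle = PrefillNode("idle", queued_tokens=0)
    print(choose_prefill_node([warm, idle], prompt).name)
```

With these numbers the cache-holding node wins (an estimated 4 queued tokens against 16 tokens of fresh prefill). If its queue grows past the cost of recomputing the prompt, the scheduler falls back to the idle node. This is the kind of balance between cache reuse and load that a cache-aware scheduler has to strike.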

Experiments show that Mooncake excels in handling long-context inputs. In tests with real-world workloads, compared to baseline methods, Mooncake improves the effective request capacity by 59% to 498% while meeting SLO requirements. Currently, Mooncake is deployed on thousands of nodes, processing over 100 billion tokens per day. In practical scenarios, its innovative architecture has enabled Kimi to handle 115% and 107% more requests on NVIDIA A800 and H800 clusters, respectively.

FAST is a premier academic conference in the field of computer storage. Over the past two decades, it has played a significant role in shaping storage technologies. With its low acceptance rate and high publication standards, it is recognized by the China Computer Federation as a Class A international academic conference for storage systems.

Editor: Li Han